# ⚠️ Breaking

Many deprecated queue config options are removed (actually, they should have been removed in 1.18/1.19).

If Gitea refuses to start with the fatal message "Please update your app.ini to remove deprecated config options", follow the error messages to remove these options from your app.ini.

Example:

```
2023/05/06 19:39:22 [E] Removed queue option: `[indexer].ISSUE_INDEXER_QUEUE_TYPE`. Use new options in `[queue.issue_indexer]`
2023/05/06 19:39:22 [E] Removed queue option: `[indexer].UPDATE_BUFFER_LEN`. Use new options in `[queue.issue_indexer]`
2023/05/06 19:39:22 [F] Please update your app.ini to remove deprecated config options
```

Many options in `[queue]` are dropped and can be removed from app.ini, including: `WRAP_IF_NECESSARY`, `MAX_ATTEMPTS`, `TIMEOUT`, `WORKERS`, `BLOCK_TIMEOUT`, `BOOST_TIMEOUT`, `BOOST_WORKERS`.

# The problem

The old queue package has some legacy problems:

* complexity: I doubt many people could tell how it works.

* maintainability: too many channels and mutexes/conds are mixed together, and too many different structs/interfaces depend on each other.

* stability: due to the complexity and poor maintainability, there are sometimes strange bugs that are difficult to debug, and some code has no tests (indeed, some code is difficult to test because so many things are mixed together).

* general applicability: although it is called a "queue", it does not behave like a well-known queue.

* scalability: it doesn't seem easy to make it work in a cluster without breaking its behavior.

It grew out of some very old code that was kept to "avoid breaking" things; however, its technical debt is now too heavy. It's a good time to introduce a better "queue" package.

# The new queue package

It keeps the old config options and concepts as much as possible.

* It only contains two major kinds of concepts:
  * The "base queue": channel, levelqueue, redis
    * They have the same abstraction and the same interface, and they are tested by the same testing code.
  * The "WorkerPoolQueue": it uses the "base queue" to provide the "worker pool" function, calling the "handler" to process the data in the base queue.
* The new code doesn't do "PushBack"
  * Think about a queue with many workers: "PushBack" can't guarantee the order of re-queued unhandled items, so the new code just does a "normal push".
* The new code doesn't do "pause/resume"
  * "pause/resume" was designed to handle some handler failures, e.g. the document indexer (Elasticsearch) being down.
  * If a queue is paused for a long time, either the producers block or new items are dropped.
  * The new code doesn't do such a "pause/resume" trick: it's not common queue behavior and it doesn't help much.
  * If there are unhandled items, the "push" function just blocks for a few seconds and then re-queues them and retries.
* The new code doesn't do "worker booster"
  * Gitea's queue handlers are light functions whose only cost is the goroutine, so it doesn't make sense to "boost" them.
  * The new code only uses "max worker number" to limit the concurrent workers.
* The new "Push" never blocks forever
  * Instead of creating more and more blocking goroutines, returning an error is friendlier to the server and to the end user.

There are more details in the code comments, e.g. the "Flush" problem, the strange "code.index" hanging problem, and the "immediate" queue problem.

Almost ready for review.

TODO:

* [x] add some necessary comments during review

* [x] add some more tests if necessary

* [x] update documents and config options

* [x] test max worker / active worker

* [x] re-run the CI tasks to see whether any test is flaky

* [x] improve the `handleOldLengthConfiguration` to provide friendlier messages

* [x] fine tune default config values (eg: length?)

## Code coverage:

`HookEventType` of pull request review comments should be `HookEventPullRequestReviewComment`, but some event types are `HookEventPullRequestComment` now.

minio/sha256-simd provides additional acceleration for SHA256 using AVX512 and SHA Extensions on x86, and the ARM64 extensions on ARM.

It is a drop-in replacement for crypto/sha256, and if the extensions are not available it falls back to the standard crypto/sha256.

---------

Signed-off-by: Andrew Thornton <art27@cantab.net>

Co-authored-by: John Olheiser <john.olheiser@gmail.com>

Ensure that issue pull requests are loaded before trying to set the self-reference.

Signed-off-by: Andrew Thornton <art27@cantab.net>

Co-authored-by: delvh <leon@kske.dev>

Co-authored-by: techknowlogick <techknowlogick@gitea.io>

Some bugs were caused by too few unit tests in fundamental packages. This PR refactors the `setting` package so that creating unit tests is easier than before.

- All `LoadFromXXX` functions have been split into two functions: `InitProviderFromXXX` and `LoadCommonSettings`. The first only contains the code to create the ini file provider; the second loads the common settings.

- It also renames all functions in `setting` from `newXXXService` to `loadXXXSetting` or `loadXXXFrom` to make the function names less confusing.

- Rename `XORMLog` to `SQLLog`, because it's a better name for it.

Maybe we should finally move these `loadXXXSetting` functions into the `XXXInit` functions? Any ideas?

---------

Co-authored-by: 6543 <6543@obermui.de>

Co-authored-by: delvh <dev.lh@web.de>

To avoid loading the same data more than once during an HTTP request, we can use a context cache. For example, some pages load a user with the same ID from the database in different areas of the same page. However, the code doing so is hidden in two different deep call chains. How should we share the user? With this PR, if both entry functions accept `context.Context` as their first parameter, we just need to refactor `GetUserByID` to reuse the user from the context cache. Then the user is not loaded twice in one HTTP request.

Of course, sometimes we want to reload an object from the database; that's why `RemoveContextData` is also exposed.

The core of the context cache is a new context type:

```go
type cacheContext struct {
	ctx  context.Context
	data map[any]map[any]any
	lock sync.RWMutex
}

var cacheContextKey = struct{}{}

func WithCacheContext(ctx context.Context) context.Context {
	return context.WithValue(ctx, cacheContextKey, &cacheContext{
		ctx:  ctx,
		data: make(map[any]map[any]any),
	})
}
```

Then you can use the four functions below to read, write, and delete data within the same context.

```go
func GetContextData(ctx context.Context, tp, key any) any
func SetContextData(ctx context.Context, tp, key, value any)
func RemoveContextData(ctx context.Context, tp, key any)
func GetWithContextCache[T any](ctx context.Context, cacheGroupKey string, cacheTargetID any, f func() (T, error)) (T, error)
```

Then let's take a look at how `GetSetting` uses it.

```go
func GetSetting(ctx context.Context, key string) (string, error) {
	return cache.GetWithContextCache(ctx, contextCacheKey, key, func() (string, error) {
		return cache.GetString(genSettingCacheKey(key), func() (string, error) {
			res, err := GetSettingNoCache(ctx, key)
			if err != nil {
				return "", err
			}
			return res.SettingValue, nil
		})
	})
}
```

First, it checks whether the context data contains the setting object for the key. If not, it queries the global cache, which may be a memory or Redis cache. If that also misses, it gets the object from the database. Finally, if the object came from the global cache or the database, it is stored in the context cache.

An object stored in the context cache is only destroyed when the context goes away.

The `commit_id` property name is the same as the equivalent functionality in GitHub. If the action was not caused by a commit, an empty string is used.

This can, for example, be used to automatically add a Resolved label to an issue fixed by a commit, or clear it when the issue is reopened.

Fixes #22391

This field is optional for Discord; however, when it exists in the payload it is now validated.

Omitting it entirely just makes Discord use the default for that webhook, which is set on the Discord side.

Signed-off-by: jolheiser <john.olheiser@gmail.com>

Previously, there was an `import services/webhooks` inside `modules/notification/webhook`.

This import was removed (after fighting against many import cycles).

Additionally, `modules/notification/webhook` was moved to `modules/webhook`, and a few structs/constants were extracted from `models/webhooks` to `modules/webhook`.

Co-authored-by: 6543 <6543@obermui.de>

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

Change all license headers to comply with the REUSE specification.

Fix #16132

Co-authored-by: flynnnnnnnnnn <flynnnnnnnnnn@github>

Co-authored-by: John Olheiser <john.olheiser@gmail.com>

When re-retrieving hook tasks from the DB, double-check that they have not been delivered in the meantime. Further, ensure that tasks are marked as delivered when they are being delivered.

In addition:

* Improve the error reporting and make sure that the webhook task population script runs in a separate goroutine.

* Only get hook task IDs out of the DB instead of the whole task when repopulating the queue.

* When repopulating the queue, make the DB request paged.

Ref #17940

Signed-off-by: Andrew Thornton <art27@cantab.net>

Co-authored-by: delvh <dev.lh@web.de>

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

This PR adds a context parameter to a bunch of methods. Some helper `xxxCtx()` methods were replaced by the methods with the normal name.

Co-authored-by: delvh <dev.lh@web.de>

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

The `getPullRequestPayloadInfo` function is widely used by many webhooks, and it works well when a PR is opened or edited. But when we comment in the PR review panel (not the PR panel), the comment content is not set as `attachmentText`.

This commit sets the comment content as `attachmentText` for PR reviews, so webhooks can obtain this information via this function.

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

Co-authored-by: techknowlogick <techknowlogick@gitea.io>

_This is a different approach to #20267; I took the liberty of adapting some parts, see below._

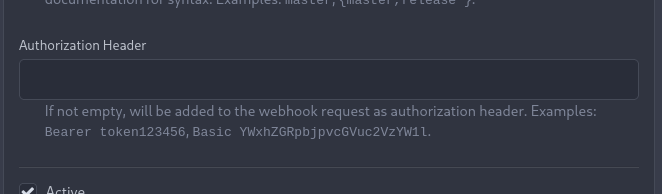

## Context

In some cases, a webhook endpoint requires some kind of authentication. The usual way is to send a static `Authorization` header with a given token. For instance:

- Matrix expects a `Bearer <token>` (already implemented by storing the header in cleartext in the metadata, which is buggy on retry #19872)

- TeamCity #18667

- Gitea instances #20267

- SourceHut https://man.sr.ht/graphql.md#authentication-strategies (this is my actual personal need :)

## Proposed solution

Add a dedicated encrypted column to the webhook table (instead of storing it as meta, as proposed in #20267), so that it becomes available for all present and future hook types (especially the custom ones #19307).

This would also solve the buggy Matrix retry #19872.

As a first step, I would recommend focusing on the backend logic and improving the frontend at a later stage. For now, the UI is a simple `Authorization` field (which could later be customized with `Bearer` and `Basic` switches).

The header name is hard-coded, since I couldn't find any use case justifying otherwise.
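The sketch below shows how a delivery request could carry the stored header. It assumes a hypothetical `webhook` struct whose `AuthorizationHeader` has already been decrypted from the new column, and `newDeliveryRequest` is an illustrative name. As described above, the header name is fixed to `Authorization`; only the value comes from the user.

```go
package main

import (
	"fmt"
	"net/http"
	"strings"
)

// webhook is a hypothetical subset of a webhook record; AuthorizationHeader is
// assumed to be already decrypted from the dedicated encrypted column.
type webhook struct {
	URL                 string
	AuthorizationHeader string
}

// newDeliveryRequest builds the POST request and adds the Authorization header
// only when one is configured.
func newDeliveryRequest(w webhook, payload string) (*http.Request, error) {
	req, err := http.NewRequest(http.MethodPost, w.URL, strings.NewReader(payload))
	if err != nil {
		return nil, err
	}
	req.Header.Set("Content-Type", "application/json")
	if w.AuthorizationHeader != "" {
		// Fixed header name; the value (e.g. "Bearer <token>") is user-supplied.
		req.Header.Set("Authorization", w.AuthorizationHeader)
	}
	return req, nil
}

func main() {
	hook := webhook{URL: "https://example.com/hook", AuthorizationHeader: "Bearer s3cr3t"}
	req, err := newDeliveryRequest(hook, `{"ok":true}`)
	if err != nil {
		panic(err)
	}
	fmt.Println(req.Header.Get("Authorization")) // Bearer s3cr3t
}
```

In this sketch the value is read from the webhook record on every delivery rather than from per-delivery metadata, so a retry rebuilds the same header.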

## Questions

- What do you think of this approach? @justusbunsi @Gusted @silverwind

- ~~How are the migrations generated? Do I have to manually create a new file, or is there a command for that?~~

- ~~I started adding it to the API: should I complete it or should I drop it? (I don't know how much the API is actually used)~~

## Done as well:

- add a migration for the existing Matrix webhooks and remove the `Authorization` logic there

_Closes #19872_

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

Co-authored-by: Gusted <williamzijl7@hotmail.com>

Co-authored-by: delvh <dev.lh@web.de>

At the moment, a repository reference is needed for webhooks. With the upcoming package PR, we need to send webhooks without a repository reference, for example when a package is uploaded to an organization. In theory, this enables the usage of webhooks for future user actions.

This PR removes the repository ID from `HookTask` and changes how the hooks are processed (see `services/webhook/deliver.go`). In a follow-up PR I want to remove the usage of the `UniqueQueue` and replace it with a normal queue, because there is no reason for it to be unique.

Co-authored-by: 6543 <6543@obermui.de>

Fixes #21379

The commits are capped by `setting.UI.FeedMaxCommitNum`, so `len(commits)` is not the correct number. This PR therefore adds a new `TotalCommits` field.

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

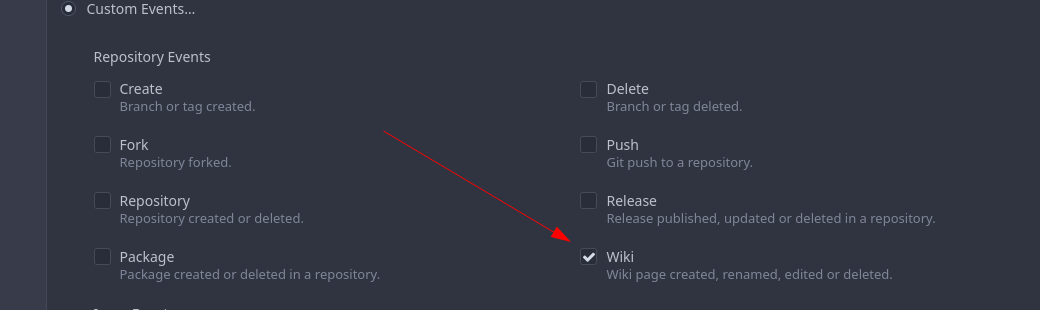

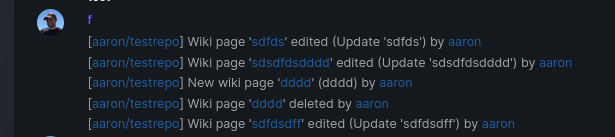

Add support for triggering webhook notifications on wiki changes.

This PR contains the frontend and backend for webhook notifications on wiki actions (create a new page, rename a page, edit a page, and delete a page). The frontend has a new checkbox under the Custom Event -> Repository Events section. There is only one checkbox for the create/edit/rename/delete actions, because it makes no sense to separate them, and other events like releases or packages follow the same scheme.

The actions themselves are separated, so that different notifications are sent (distinguished by the "action" field). All the webhook receivers implement the new interface method (Wiki) and the corresponding tests.

While implementing this, I encountered a small bug when editing a wiki page. Creating and editing a wiki page is technically the same action and is handled by the `updateWikiPage` function. But the function needs to know whether it is a new wiki page or just a change. This distinction is made by the `action` parameter, but the frontend did not send it (on form submit). This PR fixes this by adding the `action` parameter with the values `_new` or `_edit`, which the `updateWikiPage` function uses.

I've done integration tests with Matrix and Gitea (HTTP).

Fix #16457

Signed-off-by: Aaron Fischer <mail@aaron-fischer.net>

Many Go dependencies appear old, and we should update them.

The following packages have been updated:

* codeberg.org/gusted/mcaptcha

* github.com/markbates/goth

* github.com/buildkite/terminal-to-html

* github.com/caddyserver/certmagic

* github.com/denisenkom/go-mssqldb

* github.com/duo-labs/webauthn

* github.com/editorconfig/editorconfig-core-go/v2

* github.com/felixge/fgprof

* github.com/gliderlabs/ssh

* github.com/go-ap/activitypub

* github.com/go-git/go-git/v5

* github.com/go-ldap/ldap/v3

* github.com/go-swagger/go-swagger

* github.com/go-testfixtures/testfixtures/v3

* github.com/golang-jwt/jwt/v4

* github.com/klauspost/compress

* github.com/lib/pq

* gitea.com/lunny/dingtalk_webhook - instead of github.com

* github.com/mattn/go-sqlite3

* github.com/mattn/go-isatty

* github.com/minio/minio-go/v7

* github.com/niklasfasching/go-org

* github.com/prometheus/client_golang

* github.com/stretchr/testify

* github.com/unrolled/render

* github.com/xanzy/go-gitlab

* gopkg.in/ini.v1

Signed-off-by: Andrew Thornton <art27@cantab.net>

Follows: #19284

* The `CopyDir` is only used inside test code

* Rewrite `ToSnakeCase` with more test cases

* The `RedisCacher` only puts strings into the cache, so here we use an internal `toStr` to replace the legacy `ToStr`

* The `UniqueQueue` can use string as ID directly, no need to call `ToStr`

Continues on from #19202.

Following the addition of pprof labels, we can now more easily understand the relationship between a goroutine and the request that spawned it.

This PR takes advantage of the labels and adds a few others, then provides a mechanism for the monitoring page to query the pprof goroutine profile.

The resulting binary profile is immediately piped into the Google pprof library for parsing, and stack traces are then formed for the goroutines.

If the goroutine is within a context, or has been created from a goroutine within a process context, it acquires the process description labels for that process.

The goroutines are mapped to their associated PIDs, and any that do not have an associated PID are placed in a group at the bottom as unbound.

In this way we should be able to more easily examine goroutines that have been stuck.

A manager command `gitea manager processes` is also provided that can export the processes (with or without stacktraces) to the command line.

Signed-off-by: Andrew Thornton <art27@cantab.net>

There is a bug in the system webhooks whereby the active state is not checked when webhooks are prepared, and another bug whereby deactivating webhooks does not prevent queued deliveries.

* Only add SystemWebhooks to the prepareWebhooks list if they are active.

* At the time of delivery, if the underlying webhook is not active, mark it as "delivered" but with a failed delivery so it does not get delivered.

Fix #19220

Signed-off-by: Andrew Thornton <art27@cantab.net>

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

* Add missing `X-Total-Count` and fix some related bugs

Adds the `X-Total-Count` header to APIs that return a list but don't have it yet.

Fixed bugs:

* not returned after reporting error (39eb82446c/routers/api/v1/user/star.go (L70))

* crash with index out of bounds, API issue/issueSubscriptions

I also found various endpoints that return lists but do not apply/support pagination yet:

```
/repos/{owner}/{repo}/issues/{index}/labels
/repos/{owner}/{repo}/issues/comments/{id}/reactions
/repos/{owner}/{repo}/branch_protections
/repos/{owner}/{repo}/contents
/repos/{owner}/{repo}/hooks/git
/repos/{owner}/{repo}/issue_templates
/repos/{owner}/{repo}/releases/{id}/assets
/repos/{owner}/{repo}/reviewers
/repos/{owner}/{repo}/teams
/user/emails
/users/{username}/heatmap
```

If this is not expected, a new issue should be opened.

Closes #13043

* fmt

* Update routers/api/v1/repo/issue_subscription.go

Co-authored-by: KN4CK3R <admin@oldschoolhack.me>

* Use FindAndCount

Co-authored-by: KN4CK3R <admin@oldschoolhack.me>

Co-authored-by: 6543 <6543@obermui.de>

* Some refactors related to the repository model

* Move more methods out of repository

* Move repository into models/repo

* Fix test

* Fix test

* some improvements

* Remove unnecessary function

Use `hostmatcher` to replace `matchlist`.

We also introduce a better `DialContext` that does a full host/IP check; otherwise attackers could still bypass the allow/block list with a 302 redirect.

There are multiple places where Gitea does not properly escape the URLs it builds, and multiple places where it builds URLs by hand when a simpler function is already available.

This is an extensive PR attempting to fix these issues.

1. The first commit in this PR looks through all href, src and links in the Gitea codebase and has attempted to catch all the places where there is potentially incomplete escaping.

2. Whilst doing this we will prefer to use functions that create URLs over recreating them by hand.

3. All uses of strings should be directly escaped, even if they are not currently expected to contain escaping characters. The main benefit of doing this is that we can consider relaxing the constraints on usernames and repository names in the future.

4. The next commit looks at escaping in the wiki and reconsiders the URLs that are used there. With the improved escaping, wiki file names may contain '/'. (This implementation still places all of the wiki files in the root directory of the repo, but this would not be difficult to change.)

5. The title generation in feeds is now properly escaped.

6. `EscapePound` is no longer needed: URLs should be PathEscaped / QueryEscaped as necessary, but then re-escaped with `Escape` when creating HTML with locales.
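The escaping rules above can be illustrated with `net/url` and `html`. Path segments go through `url.PathEscape`, and query values go through `url.QueryEscape`. A URL placed into raw HTML, for example inside a translated string, is HTML-escaped as well. The helpers `wikiPageURL`, `searchURL` and `linkHTML` and their URL layouts are hypothetical.

```go
package main

import (
	"fmt"
	"html"
	"net/url"
)

// wikiPageURL escapes every user-controlled path segment, so names containing
// '/', '#', '?' or spaces cannot break out of their segment.
func wikiPageURL(appSubURL, owner, repo, page string) string {
	return appSubURL + "/" + url.PathEscape(owner) + "/" + url.PathEscape(repo) + "/wiki/" + url.PathEscape(page)
}

// searchURL escapes a free-text value for use in a query string.
func searchURL(appSubURL, keyword string) string {
	return appSubURL + "/explore/repos?q=" + url.QueryEscape(keyword)
}

// linkHTML builds an anchor for a message inserted as raw HTML, so the
// already URL-escaped href still needs HTML escaping (e.g. '&' -> "&amp;").
func linkHTML(href, text string) string {
	return fmt.Sprintf(`<a href="%s">%s</a>`, html.EscapeString(href), html.EscapeString(text))
}

func main() {
	fmt.Println(wikiPageURL("/gitea", "user", "repo", "Home/Page #1")) // /gitea/user/repo/wiki/Home%2FPage%20%231
	fmt.Println(linkHTML(searchURL("/gitea", "a b&c")+"&page=2", "search"))
}
```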

Signed-off-by: Andrew Thornton <art27@cantab.net>